Install DeepSeek R1 on your VPS: Your Cost-Free Guide to Maximum Efficiency!

DeepSeek R1 is an advanced AI model designed for various applications, including chatbot functionalities. Installing it on your Ubuntu VPS with 6GB RAM allows you to leverage AI capabilities locally, eliminating the need for costly cloud services. This guide will walk you through the installation process step by step, ensuring a smooth setup and optimal performance.

DeepSeek R1 is an AI model that offers powerful functionalities, making it ideal for applications like chatbots, natural language processing, and more. By installing DeepSeek R1 on your Ubuntu VPS, you can harness its capabilities without incurring the costs associated with cloud-based solutions. This local installation is particularly beneficial if you’re looking to save on expenses while maintaining control over your AI environment.

The Racknerd 8GB VPS offer I’ve mentioned in my previous articles is a good fit for running the small 1.5B DeepSeek R1 model.

Why You Should Run AI Models Locally

Imagine this: every time you engage with an AI online, you’re sending your thoughts, ideas, and questions into a black box owned by someone else. You trust that they have your best interests at heart, but do you really know where that data goes? When you use popular platforms like ChatGPT or DeepSeek, your information is no longer yours; it’s in their hands.

Now, let’s talk about DeepSeek. Their servers are nestled in China, a place with cybersecurity laws that can grant authorities sweeping access to data. This means that anything you share could potentially fall under the scrutiny of local laws that are quite different from what you might expect. It’s a sobering thought, isn’t it?

The bigger picture here isn’t just about DeepSeek or any one service; it’s about your privacy and control over your own data. When you store your information on remote servers, it becomes vulnerable to the legal frameworks of whatever country those servers are in.

But here’s the good news: running AI models locally changes everything. By keeping your interactions on your own device, you take back control. Your thoughts and data stay private, cocooned within the safety of your hardware.

This shift towards local AI isn’t just a trend; it’s a powerful move toward data sovereignty. It empowers you to harness cutting-edge technology without sacrificing your privacy. In a world where your personal information can feel like currency, maintaining confidentiality is not just smart—it’s essential.

So, consider this: why hand over the keys to your data when you can keep them close? Embracing local AI solutions ensures that you can work confidently and securely with your sensitive information. It’s time to take the reins and safeguard your digital life.

System Requirements

DeepSeek requires significant computational resources primarily due to the size and complexity of its AI models. Larger models, such as those with billions of parameters, demand more processing power and memory to run efficiently. Here’s a breakdown of the key factors contributing to the resource requirements of DeepSeek:

Optimization Techniques: To make the most of available resources, especially in constrained environments like a VPS with limited RAM, techniques such as quantization and batch size adjustments can be employed. These methods help reduce memory usage and improve computational efficiency.

Model Size: DeepSeek offers models ranging from 1.5 billion parameters to 671 billion parameters. The larger the model, the more computational resources it requires. This is because each parameter in the model needs to be processed, and larger models have more parameters to manage.

Memory Requirements: Running these models necessitates a substantial amount of memory. While VRAM (Video Random Access Memory) is often used for graphics-intensive tasks, it’s also leveraged for AI computations due to its high bandwidth and efficiency in handling parallel operations. Regular RAM can be used as an alternative, but it may not provide the same level of performance.
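To make the link between model size, quantization, and memory concrete, here is a rough back-of-the-envelope sketch. It assumes the weights take about (parameters × bits per weight ÷ 8) bytes and ignores the context cache and runtime overhead, so read the results as a lower bound rather than a measurement. The function name `estimate_weights_gb` is my own and not part of any tool.

```shell
# Rough weight-memory estimate (assumption: overhead and context cache ignored).
# Usage: estimate_weights_gb <parameters in billions> <bits per weight>
# billions of parameters x bits per weight / 8 = gigabytes of weights
estimate_weights_gb() {
  awk -v p="$1" -v b="$2" 'BEGIN { printf "%.2f\n", p * b / 8 }'
}

# Examples:
#   estimate_weights_gb 1.5 16   # 1.5B model at 16 bits per weight
#   estimate_weights_gb 1.5 4    # same model quantized to 4 bits per weight
```

The second call shows why quantization matters on a small VPS: going from 16 bits to 4 bits per weight cuts the weight memory to a quarter.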

Different Versions of DeepSeek R1:

DeepSeek R1 is an AI model available in various configurations to suit different use cases and resource availability. The different versions cater to a range of performance and resource needs.

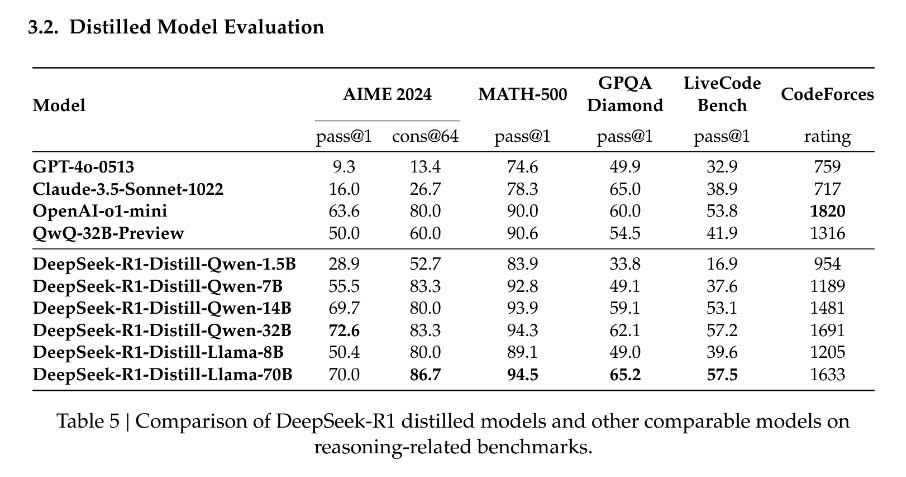

AIME 2024, GPQA Diamond, Codeforces, LiveCodeBench, and MATH-500 are evaluation benchmarks used to assess AI models, while Pass@1 and Cons@64 are the metrics reported on them.

- AIME 2024: The 2024 American Invitational Mathematics Examination, a set of competition math problems that test multi-step reasoning.

- GPQA Diamond: The highest-quality subset of GPQA, a benchmark of graduate-level science questions written to be hard to answer by simply searching the web.

- Codeforces and LiveCodeBench: Codeforces is a competitive programming platform, and results on it reflect how well a model solves contest problems. LiveCodeBench is a code generation benchmark built from recently published contest problems. Both evaluate a model’s ability to understand programming problems and produce working code.

- MATH-500: A set of 500 problems drawn from the MATH dataset of competition mathematics. It evaluates reasoning, calculation, and comprehension of various math concepts.

- Pass@1: This metric measures the proportion of problems the model answers correctly on a single attempt. A higher Pass@1 score indicates better first-attempt accuracy.

- Cons@64: Short for consensus at 64 samples. The model answers each question 64 times, and the most common answer (the majority vote) is the one that gets scored. A higher Cons@64 score means the model’s most frequent answer is more often the correct one.

These metrics are for assessing the performance of AI models in various contexts, ensuring that they not only provide correct answers but also do so reliably across different scenarios.
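As a small illustration of the two metrics, here is a sketch that scores a handful of sampled answers to one question. It assumes answers are single tokens (no spaces) and that single-attempt accuracy is estimated by averaging correctness over the samples. The function names are hypothetical, and a real evaluation would use 64 samples per question for the consensus score.

```shell
# pass_at_1 EXPECTED ANSWER...
# Prints the fraction of sampled answers that equal EXPECTED.
pass_at_1() {
  expected="$1"
  shift
  total=$#
  correct=0
  for answer in "$@"; do
    if [ "$answer" = "$expected" ]; then
      correct=$((correct + 1))
    fi
  done
  awk -v c="$correct" -v t="$total" 'BEGIN { if (t == 0) exit 1; printf "%.2f\n", c / t }'
}

# majority_answer ANSWER...
# Prints the most frequent answer (the consensus). Ties are not handled specially.
majority_answer() {
  printf '%s\n' "$@" | sort | uniq -c | sort -rn | head -n 1 | awk '{ print $2 }'
}

# Example with four samples for a question whose correct answer is 42:
#   pass_at_1 42 42 41 42 42
#   majority_answer 42 41 42 42
```

The consensus answer counts as correct whenever the majority is right, even if some individual samples are wrong, which is why consensus scores tend to sit above single-attempt scores.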

You can read more about the different models and the evaluations over in the DeepSeek-R1 Release Paper.

1. DeepSeek R1 – 1.5B Parameters (recommended)

- VRAM Requirements: Approximately 4GB of VRAM is recommended for optimal performance. However, it can run on less with some adjustments.

- RAM Requirements: Around 8GB of RAM is suggested to ensure smooth operation.

- Compatibility with Regular RAM: Yes, it can run on regular RAM without dedicated VRAM, though performance may be slower.

This is the version I recommend for the Racknerd VPS offer, unless you have a more powerful VPS or you’re running it locally on a more powerful PC.

2. DeepSeek R1 – 2.5B Parameters

- VRAM Requirements: Requires about 6GB of VRAM for optimal performance.

- RAM Requirements: Suggests 12GB of RAM for smooth operation.

- Compatibility with Regular RAM: It can run on regular RAM, but performance may be suboptimal.

3. DeepSeek R1 – 6B Parameters

- VRAM Requirements: Needs approximately 12GB of VRAM for optimal performance.

- RAM Requirements: Recommends 16GB of RAM for smooth operation.

- Compatibility with Regular RAM: While technically possible, performance will be significantly impacted without adequate VRAM.

4. DeepSeek R1 – 7B Parameters

- VRAM Requirements: Requires about 14GB of VRAM for optimal performance.

- RAM Requirements: Suggests 20GB of RAM for smooth operation.

- Compatibility with Regular RAM: Running on regular RAM is challenging and may not be feasible without substantial performance degradation.

5. DeepSeek R1 – 8B Parameters

- VRAM Requirements: Needs approximately 16GB of VRAM for optimal performance.

- RAM Requirements: Recommends 24GB of RAM for smooth operation.

- Compatibility with Regular RAM: Running on regular RAM is not practical due to high resource demands.
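Before picking one of the versions above, it can help to check how much RAM the VPS actually reports. The sketch below reads `/proc/meminfo`-style text from standard input, which is where Linux exposes the `MemTotal` figure. The function names `mem_total_gib` and `ram_at_least` are my own helpers, not standard commands.

```shell
# Print total RAM in GiB (one decimal) from /proc/meminfo-style input on stdin.
mem_total_gib() {
  awk '/^MemTotal:/ { printf "%.1f\n", $2 / 1024 / 1024 }'
}

# Succeed (exit 0) if total RAM from stdin is at least the given number of GiB.
ram_at_least() {
  awk -v need="$1" '/^MemTotal:/ { ok = ($2 / 1024 / 1024 >= need) } END { exit !ok }'
}

# Example on a Linux host:
#   mem_total_gib < /proc/meminfo
#   ram_at_least 7 < /proc/meminfo && echo "RAM looks sufficient for the 1.5B model"
```

The reported total is usually a little below the advertised plan size, so it is safer to compare against a slightly lower threshold than the nominal figure.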

What is Ollama?

Ollama is an open-source framework designed to make it easy to run large language models locally. It provides a user-friendly interface and command-line tools to manage and interact with AI models. Using Ollama, you can easily install, run, and manage DeepSeek R1 on your Ubuntu VPS.

To run DeepSeek on a VPS, installing Ollama is essential because it provides the necessary framework and tools to efficiently manage and execute the AI model. Ollama is designed specifically to run large language models like DeepSeek locally. It acts as a middleware that simplifies the process of deploying and managing AI models on your VPS. Ollama leverages your VPS’s hardware, including GPUs if available, to accelerate DeepSeek’s computations, improving performance.

Installation Steps

Before installing DeepSeek R1, you need to install Ollama on your Ubuntu VPS. Follow these steps to install Ollama.

1. Update the System

Start by updating your Ubuntu system to ensure all packages are up to date. This step is crucial for maintaining security and compatibility.

sudo apt update && sudo apt upgrade -y

2. Install Ollama

curl -fsSL https://ollama.com/install.sh | sh

If this doesn’t work, install curl with the following command, then try again:

sudo apt install curl

3. Check Ollama status

After the installation completes, check whether the service is running:

systemctl status ollama

Or verify the version:

ollama -v

This should display the version of Ollama installed.

4. Start Ollama

ollama serve

Or you can start it as a service and check its status using the following:

sudo systemctl start ollama

sudo systemctl status ollama

To ensure Ollama starts automatically on boot, enable the service:

sudo systemctl enable ollama
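If you’d rather check everything in one go, the status commands can be wrapped in a small script. This is only a sketch: it assumes the installer registered a systemd service named `ollama`, as the commands in this guide do, and the helper names `have_cmd` and `ollama_health` are made up for illustration.

```shell
# Return success if the named command is available on PATH.
have_cmd() {
  command -v "$1" >/dev/null 2>&1
}

# Report whether Ollama is installed and whether its systemd service is active.
ollama_health() {
  if ! have_cmd ollama; then
    echo "ollama: not installed"
    return 1
  fi
  if have_cmd systemctl && systemctl is-active --quiet ollama; then
    echo "ollama: service active"
  else
    echo "ollama: installed, but the service is not active"
    return 1
  fi
}

# Example:
#   ollama_health || sudo systemctl start ollama
```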

5. Download the DeepSeek R1 Model

Download the DeepSeek R1 model from the official repository. Ensure you select the version compatible with your VPS specifications. For the smallest version 1.5b just run:

ollama pull deepseek-r1:1.5b

Replace 1.5b with another version if you have a more powerful VPS or you’re running it locally on a powerful PC.

After you have downloaded it, you can check if it’s installed using

ollama list

There you will see deepseek-r1:1.5b installed.
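In a script, the same check can be automated by filtering the list output. The sketch below assumes the output starts with a header row and puts the model name in the first column. The helper `has_model` is hypothetical and simply reads that output from standard input.

```shell
# Succeed if the given model name appears in the first column of the
# list output supplied on stdin (the header row is skipped).
has_model() {
  awk -v m="$1" 'NR > 1 && $1 == m { found = 1 } END { exit !found }'
}

# Example:
#   ollama list | has_model deepseek-r1:1.5b && echo "model is installed"
```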

After downloading, to start using the model, use the following command:

ollama run deepseek-r1:1.5b

After the model starts, you can interact with DeepSeek R1 through this interface.
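Besides the interactive prompt, Ollama also serves a local HTTP API, by default on port 11434, which is handy if you want to call the model from your own scripts. Here is a minimal sketch that posts a prompt to the `/api/generate` endpoint. The helper names are my own, and the payload builder deliberately skips JSON escaping, so it only suits simple prompts without quotes or backslashes.

```shell
# Build the JSON body for a non-streaming request.
# Assumption: model name and prompt contain no quotes or backslashes.
generate_payload() {
  printf '{"model": "%s", "prompt": "%s", "stream": false}\n' "$1" "$2"
}

# Send a prompt to the Ollama server running on this machine.
ask_model() {
  curl -s http://localhost:11434/api/generate -d "$(generate_payload "$1" "$2")"
}

# Example:
#   ask_model deepseek-r1:1.5b "Explain what a VPS is in one sentence."
```

The reply comes back as JSON, with the generated text in its `response` field.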

Conclusion

By installing DeepSeek R1 on your Ubuntu VPS with 6GB RAM, you’ve successfully set up a cost-effective AI environment. This setup not only saves you money but also provides you with the flexibility and control to tailor the AI functionalities to your specific needs.

However, keep in mind that 6GB RAM might not be enough even for the 1.5B model if you’re running complex tasks. If you want to explore more of this model, you can install Ollama on your own PC. It has a Windows version over at https://ollama.com/download, which is worth trying if you have enough VRAM to run stronger models.

Enjoy exploring the capabilities of DeepSeek R1 and enhancing your projects with its powerful features!

Here is an alternative installation mode: